U.S. electric utilities could be missing out on millions of dollars in value for their customers by using outdated grid modeling techniques, research has found. The finding has profound implications for grid planning in the energy transition, where granularity of data is critical for effective decision making.

Amid the gloom and doom of mainstream media coverage of climate change, it is easy to miss the good news that society may already have the technology to safely, reliably, and cost-effectively deliver the electricity needed to power our homes, industry, and vehicles with at least 80% fewer greenhouse gas emissions. However, the path from here to there is extremely complicated and must be planned and executed with great care. Otherwise, utilities risk overcharging their customers and jeopardizing reliability.

Following more than a decade of rapid cost declines and the passage of the Inflation Reduction Act, renewable resources such as wind, solar, and geothermal are expected to increasingly form the backbone of the power grid. To back up and balance the variable output of wind and solar, the grid will need battery storage, demand response, and flexible, dispatchable thermal generating technologies. Reciprocating internal combustion engines (RICE), which today burn natural gas but are expected soon to be able to run on 100% clean hydrogen, are an example of the latter category.

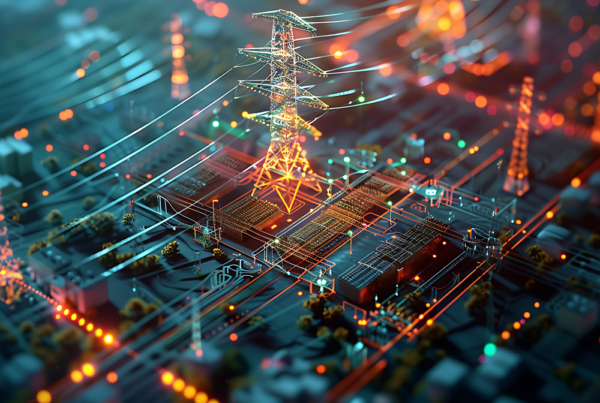

Planning a transition to a highly renewable grid that maintains reliability without major cost increases requires advanced analytical tools and techniques that can accurately value modern power resources. In an era of ever-growing computing power and digitization, the unfortunate truth is that most utility planners still use grid models built for an earlier era. Power system modeling must incorporate new capabilities: in particular, Monte Carlo simulation that treats weather as a fundamental driver of load, renewable output, and forced outages, along with 5-minute simulation time steps. These capabilities correct a systematic model bias toward legacy generation resource types and instead select for the highly flexible resources required to integrate the large amounts of renewable energy needed to decarbonize the power system.
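To make the Monte Carlo idea concrete, here is a minimal Python sketch. It assumes a hypothetical five-unit system in which a single weather draw per simulated day scales load, sets solar output, and (by assumption) raises forced-outage odds on hot days. All names and numbers (`simulate_day`, `monte_carlo`, unit sizes, outage rates) are illustrative inventions, not taken from any real model or utility. It uses hourly steps for brevity; a production model would pair many more draws with finer time steps.

```python
import math
import random

# Illustrative system (hypothetical numbers, not from any real utility).
UNIT_SIZES_MW = [200, 200, 150, 150, 100]  # dispatchable units, 800 MW total
SOLAR_PEAK_MW = 300                         # clear-sky solar peak
BASE_OUTAGE_RATE = 0.05                     # assumed daily forced-outage probability


def simulate_day(rng: random.Random) -> float:
    """Return unserved energy (MWh) for one randomly drawn weather day."""
    # One weather draw drives all three uncertain quantities.
    heat = rng.gauss(1.0, 0.08)        # > 1 means a hotter-than-normal day
    clear_sky = rng.uniform(0.3, 1.0)  # fraction of clear-sky solar output

    # Hot days raise load and, by assumption, raise forced-outage odds.
    outage_rate = BASE_OUTAGE_RATE * (1 + 5 * max(0.0, heat - 1.0))
    firm_mw = sum(s for s in UNIT_SIZES_MW if rng.random() > outage_rate)

    unserved_mwh = 0.0
    for hour in range(24):
        load = heat * (550 + 250 * max(0.0, math.sin(math.pi * (hour - 8) / 14)))
        solar = SOLAR_PEAK_MW * clear_sky * max(0.0, math.sin(math.pi * (hour - 6) / 12))
        unserved_mwh += max(0.0, load - solar - firm_mw)  # MW shortfall x 1-hour step
    return unserved_mwh


def monte_carlo(draws: int, seed: int = 42) -> tuple[float, float]:
    """Estimate expected unserved energy (MWh/day) and loss-of-load probability."""
    rng = random.Random(seed)
    results = [simulate_day(rng) for _ in range(draws)]
    expected_unserved = sum(results) / draws
    lolp = sum(1 for r in results if r > 0) / draws
    return expected_unserved, lolp


if __name__ == "__main__":
    eue, lolp = monte_carlo(draws=10_000)
    print(f"expected unserved energy: {eue:.1f} MWh/day, LOLP: {lolp:.3f}")
```

Running many draws yields a distribution of outcomes rather than one deterministic path, which is what lets a planner see rare, weather-driven shortfalls that a single run based on typical conditions could miss.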

Older modeling platforms use algorithms that produce a single hourly simulation. For example, one run of a twenty-year study covers 8,760 hours per year × 20 years = 175,200 data points. A laptop can handle this “small data,” which makes the model easy to use but fails to take advantage of computing power that is now widely available and affordable. Simple isn’t always best; in fact, it can lead to suboptimal decisions. The results generated by these legacy models are heavily biased toward the favored resources of the past: combined-cycle gas turbines and other “baseload” resources that are not well equipped to follow the variable output of renewables.
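The scale difference is easy to quantify. This short Python sketch extends the article's 175,200-point arithmetic to 5-minute time steps (12 intervals per hour) and a Monte Carlo study; the choice of 50 weather draws is an assumption for illustration, not a figure from the research, and `data_points` is a hypothetical helper.

```python
HOURS_PER_YEAR = 8_760
YEARS = 20


def data_points(intervals_per_hour: int, years: int, draws: int) -> int:
    """Number of simulated intervals in a study (hypothetical helper)."""
    return HOURS_PER_YEAR * intervals_per_hour * years * draws


legacy = data_points(intervals_per_hour=1, years=YEARS, draws=1)
modern = data_points(intervals_per_hour=12, years=YEARS, draws=50)  # 50 draws is assumed

print(f"legacy hourly, single run: {legacy:,}")   # 175,200
print(f"5-minute, 50 weather draws: {modern:,}")  # 105,120,000
print(f"ratio: {modern // legacy:,}x")            # 600x
```

Even at this modest draw count the study grows by a factor of 600, which helps explain why such studies tend to need more than a laptop.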

Conversely, these models are systematically biased against the flexible integrating resources of the future, which generate most of their value within the hour. Without a sub-hourly lens, that sub-hourly value disappears from view entirely.

Power system models that simulate real-time operations at hourly resolution are the equivalent of trying to measure a car’s zero-to-sixty time with an egg timer. Whether you’re timing a sports car or a semi-truck, the timer will show the same result: one minute. Even without consulting a supercomputer, we know that is just not right and undervalues the quickness and agility of the sports car. Outdated grid modeling systems likewise undervalue flexible resources by failing to capture the emerging volatility inherent in a highly renewable system.
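A toy calculation shows how hourly averaging can hide intra-hour value. The sketch below assumes a hypothetical hour of 5-minute prices with a brief spike, and a 1 MW fast-start resource with a $40/MWh variable cost that can start and stop within a single interval; start-up costs and ramp limits are ignored. `dispatch_value` is an illustrative helper, not part of any planning tool.

```python
# Hypothetical 5-minute prices ($/MWh) within one hour: mostly flat, with a
# brief spike such as a renewable ramp might cause (12 intervals in total).
five_min_prices = [20.0] * 6 + [100.0] * 2 + [20.0] * 4
MARGINAL_COST = 40.0  # assumed variable cost of a fast-start resource, $/MWh
CAPACITY_MW = 1.0     # assumed resource size


def dispatch_value(prices, interval_hours, cost=MARGINAL_COST, mw=CAPACITY_MW):
    """Operating margin ($) from running only in intervals where price exceeds cost."""
    return sum(max(p - cost, 0.0) * mw * interval_hours for p in prices)


# An hourly model only ever sees the average price for the hour.
hourly_price = sum(five_min_prices) / len(five_min_prices)
hourly_value = dispatch_value([hourly_price], interval_hours=1.0)
sub_hourly_value = dispatch_value(five_min_prices, interval_hours=5 / 60)

print(f"hourly average price: ${hourly_price:.2f}/MWh")               # $33.33/MWh
print(f"value seen by hourly model: ${hourly_value:.2f}")             # $0.00
print(f"value seen at 5-minute resolution: ${sub_hourly_value:.2f}")  # $10.00
```

The hourly model sees an average price below the resource's cost and assigns it no operating value, while the 5-minute view captures the margin earned during the spike. For this simple payoff, max(price − cost, 0), which is convex, averaging prices first can never raise the computed value and often lowers it (Jensen's inequality); real models add start costs, ramp rates, and storage state of charge on top of this.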

As renewables make up a growing share of U.S. grids, utility planners should be planning the retirement of older, inflexible generation and its replacement with renewable energy balanced by highly flexible, dispatchable resources such as batteries, demand response, and utility-scale reciprocating internal combustion engines. For RICE and batteries, the operational value these flexible resources generate over time is widely projected to outweigh their capital cost premium over older technologies. Now all it takes is the right tools to see it.

David Millar is a Principal of Legislative, Markets and Regulatory Policy at Wärtsilä Energy. He has over two decades of experience as an economic analyst in the electric power sector, including roles in consulting, electric utility, and research organizations.

ABOUT UAI

Utility Analytics Institute (UAI) is a utility-led membership organization that provides support to the industry to advance the analytics profession and utility organizations of all types, sizes, and analytics maturity levels, as well as analytics professionals throughout every phase of their career. UAI membership includes over 100 utilities and utility agencies across North America and influential market leaders in the utility analytics industry. This community of analysts shares experiences and insights to learn from each other as well as other industries.

Check out UAI Communities and become a member to join the discussions at Utility Analytics Institute.